AI Video Generators 2026: 5 Tools Most People Aren’t Using Yet

If you’re bored of generic text-to-video demos, this is the other side of AI video in 2026: tools that edit real footage with prompts, redo moments without reshooting, and generate visuals you can drop straight into Shorts/Reels.

This guide is for YouTubers, social teams, and creators who want more control (and more rewatchable results) than the “standard” generators you’ve probably already tried.

Last updated: February 2026

By Greg Preece

I test AI video tools hands-on and break down what’s actually useful for creators (not just what looks cool in a demo).

TL;DR (Summary)

- Best for editing existing footage with text prompts: Kling O1

- Best for quick “informational visuals” you can drop into Shorts: Agent Opus

- Best for motion/gesture-driven generations: Kling Video 2.6

- Best for fixing a spoken mistake without a re-record: Descript (Video Regenerate)

- Best for re-doing a moment inside a shot (performance/dialogue): LTX Studio (Retake)

Quick comparison

| Tool | Best for | What you feed it | What you get back | Why it’s different |
|---|---|---|---|---|
| Kling O1 | Editing real footage with prompts | An existing video + a typed instruction | A revised clip | Add/remove/replace/restyle and change the “feel” without traditional masking |
| Agent Opus (OpusClip) | Short-form “informational visuals” | A prompt (and optionally assets like your face/logo) | Short, punchy visual clips | Fast motion-graphics style outputs without After Effects complexity |
| Kling Video 2.6 | Motion-driven character/video generation | A prompt + (optionally) motion/voice references | Realistic-style generated clips | More control via motion/gesture + voice-style direction |
| Descript (Video Regenerate) | Fixing a word/phrase in a talking-head | Your transcript edit | Corrected audio + updated mouth movement | Makes an “oops” word change look natural on camera |
| LTX Studio (Retake) | Re-doing a moment inside an existing shot | A video + a selected moment + direction | That moment regenerated (rest stays consistent) | “Redirect” a shot without a reshoot or full regen |

Table of contents

- Quick links

- How I tested these tools

- 1) Kling O1

- 2) Agent Opus (OpusClip)

- 3) Kling Video 2.6

- 4) Descript (Video Regenerate)

- 5) LTX Studio (Retake)

- Which one should you use?

- FAQ

Prefer to watch? Here’s the video. Prefer to skim? The full breakdown is below.

Quick links

- Kling O1: Try Kling O1 →

- Agent Opus: Try Agent Opus →

- Kling Video 2.6: Try Kling Video 2.6 →

- Descript: Try Descript →

- LTX Studio: Try LTX Studio →

How I tested these tools

Everything below maps to what I demonstrated in the video:

- Kling O1: Prompt-based edits on existing clips (add/remove/replace/restyle and camera-feel changes).

- Agent Opus: Generated short “stat/visual” style clips (including tests using my face as an input).

- Kling Video 2.6: Generated realistic clips with voice + movement/gesture influence.

- Descript: Replaced a mistaken word in my transcript and generated a fix that also updates mouth movement.

- LTX Studio (Retake): Selected a moment in an existing shot and redirected what happens in that moment.

1) Kling O1

Kling O1 is the one I’m most excited about if you already shoot footage and want an unfair advantage: you can take your real clip and make it more interesting by typing the change.

Think: adding/removing elements, changing weather/styling, and experimenting with the “camera feel” without doing a full manual VFX workflow.

Caption: A typical “video-to-video” edit: your original clip, your typed instruction, and the regenerated result.

Try it here: Try Kling O1 →

How to use Kling O1 (the simple workflow)

- Upload a clip you recorded (or an existing video you’re allowed to edit).

- Type one clear instruction (e.g., “remove the object on the table” or “make this scene rainy”).

- Generate a version, then iterate with one change at a time.

- When you like a result, export the clip and drop it into your normal edit.

What it’s best for

- Turning “fine” footage into something people rewatch (little surprises, cleaner composition, stronger vibe)

- Fixing distractions (removing something awkward in the frame)

- Trying alternate directions fast (different styling, atmosphere, or emphasis)

Practical tips (so your results don’t get weird)

- Keep prompts specific: one change per generation beats a huge paragraph.

- If you need multiple changes, do them in passes (remove → restyle → camera feel).

- Use this ethically: if you’re editing people, get consent—especially for commercial content.
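The "one change per generation, in passes" tip can be sketched as a small planning helper. This is only an illustration of the ordering idea, not a Kling feature or API: `plan_passes`, `PASS_ORDER`, and the edit kinds are hypothetical names, and the camera prompt is an invented example.

```python
# Hypothetical planning helper: it only orders your prompts, it does not call Kling.
PASS_ORDER = ["remove", "restyle", "camera"]


def plan_passes(edits):
    """Return one prompt per generation, ordered remove -> restyle -> camera."""
    unknown = [kind for kind, _ in edits if kind not in PASS_ORDER]
    if unknown:
        raise ValueError(f"unknown edit kind(s): {unknown}")
    # sorted() is stable, so edits of the same kind keep their original order.
    ordered = sorted(edits, key=lambda edit: PASS_ORDER.index(edit[0]))
    return [prompt for _, prompt in ordered]


edits = [
    ("camera", "add a slow push-in toward the subject"),  # invented example prompt
    ("remove", "remove the object on the table"),
    ("restyle", "make this scene rainy"),
]

for number, prompt in enumerate(plan_passes(edits), start=1):
    print(f"Pass {number}: {prompt}")
```

Each printed line is one instruction to type for one generation, so a bad result can be traced to a single change.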

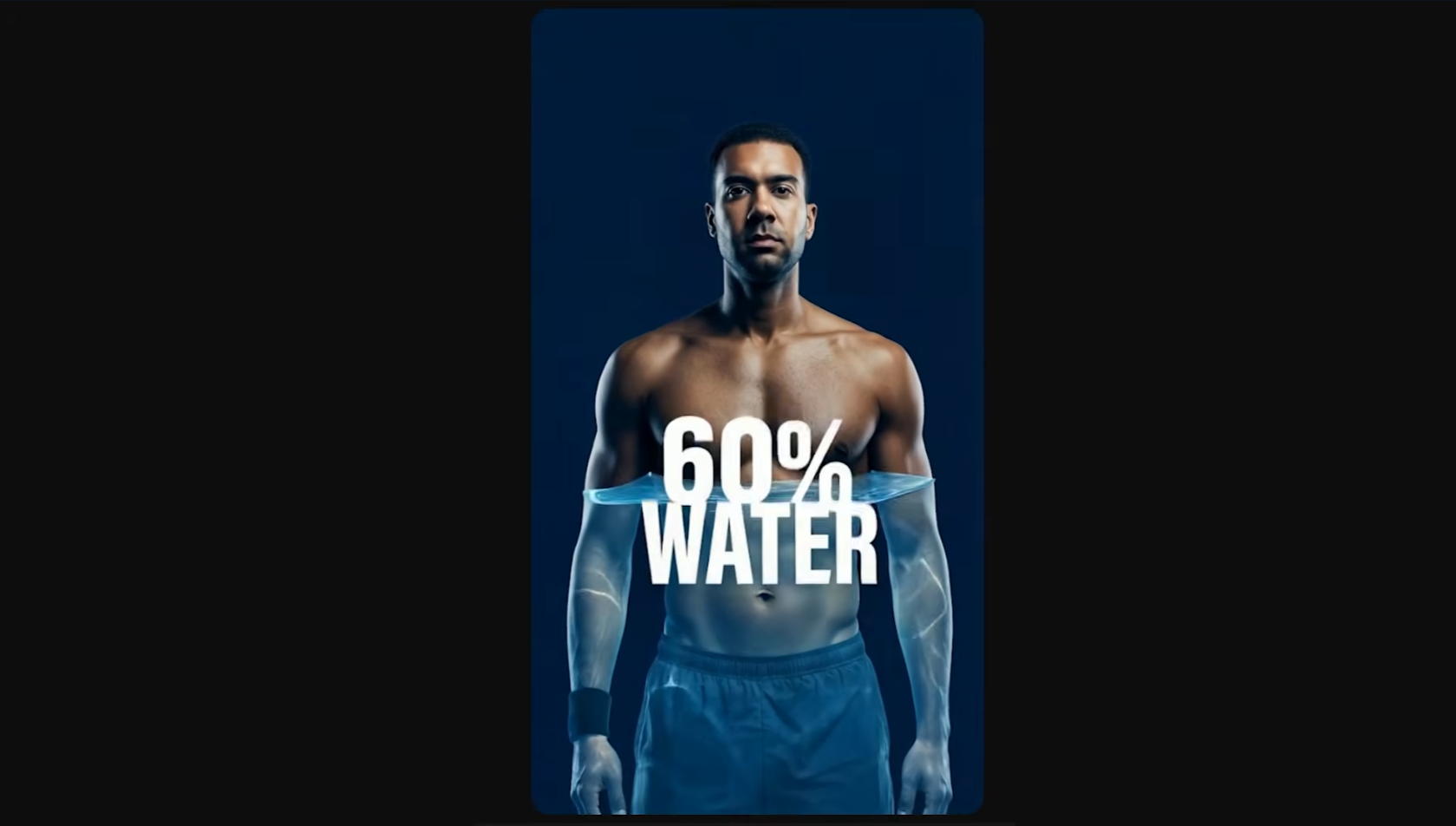

2) Agent Opus (OpusClip)

Agent Opus is the “make me a visual for this point” tool.

In the video, I used it to generate short, informational visuals you can drop into Shorts, TikToks, Reels, or even podcasts when you mention a stat or need a quick animation—without opening After Effects.

Caption: A short informational visual clip you can slot into a Shorts edit when you reference a stat or concept.

Try it here: Try Agent Opus → (use the Agent Opus link under Quick links)

How to use Agent Opus for “informational visuals”

- Write the one idea you need visualized (a stat, concept, or short story beat).

- Generate a few variations (different styles/visual approaches).

- Pick the best one and export the clip.

- Drop it into your edit as a pattern-break (hook → value → visual → next beat).

What it’s best for

- Making short-form more “watchable” by adding pattern interrupts

- Explainers and podcasts: visuals for stats, definitions, timelines, anatomy-style diagrams, etc.

- Marketing snippets: quick visuals that support a claim without filming new footage

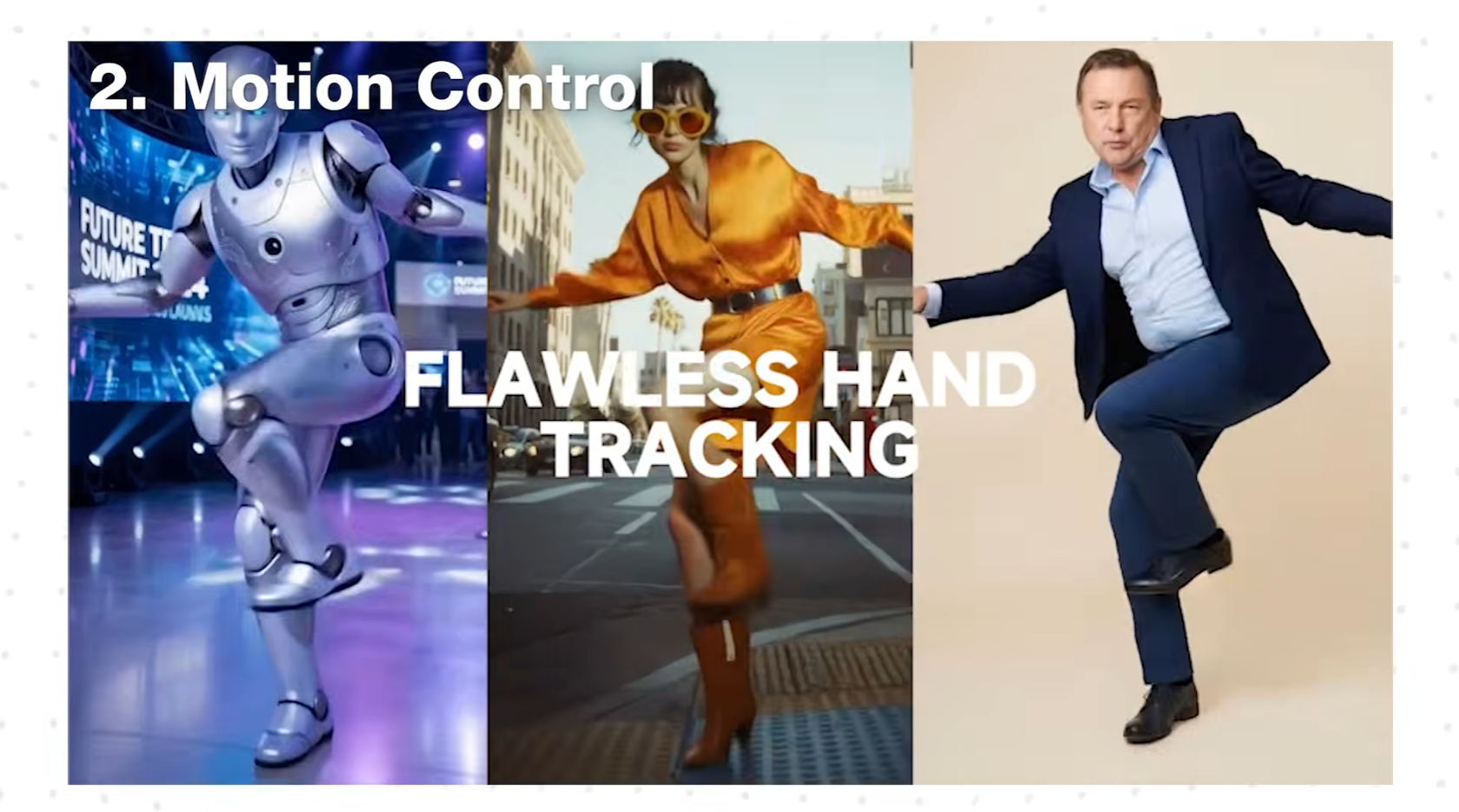

3) Kling Video 2.6

If Kling O1 is about editing what you already shot, Kling Video 2.6 is the “generated video, but with more of you in it” option.

In the video, I highlighted two angles:

- You can bake in voice (your voice or a created voice style)

- You can generate clips where the character follows your movements (expressions, gestures, body movement—hands included)

Caption: Motion/gesture-driven generation: you provide movement reference and the output follows that performance.

Try it here: Try Kling Video 2.6 →

A practical “creator” workflow for 2.6

- Record a movement reference (you performing the facial expressions and gestures you want in the output).

- Define the character/scene you want in the output.

- Generate, then iterate on prompt details (wardrobe, environment, camera feel).

- Cut the best 1–2 seconds into Shorts as the hook, then transition into your real content.

What it’s best for

- Hooks where you want something cinematic/realistic, but still guided by your performance

- “Me, but in a different world” style intros (done responsibly)

- Creative experiments where motion sells the believability

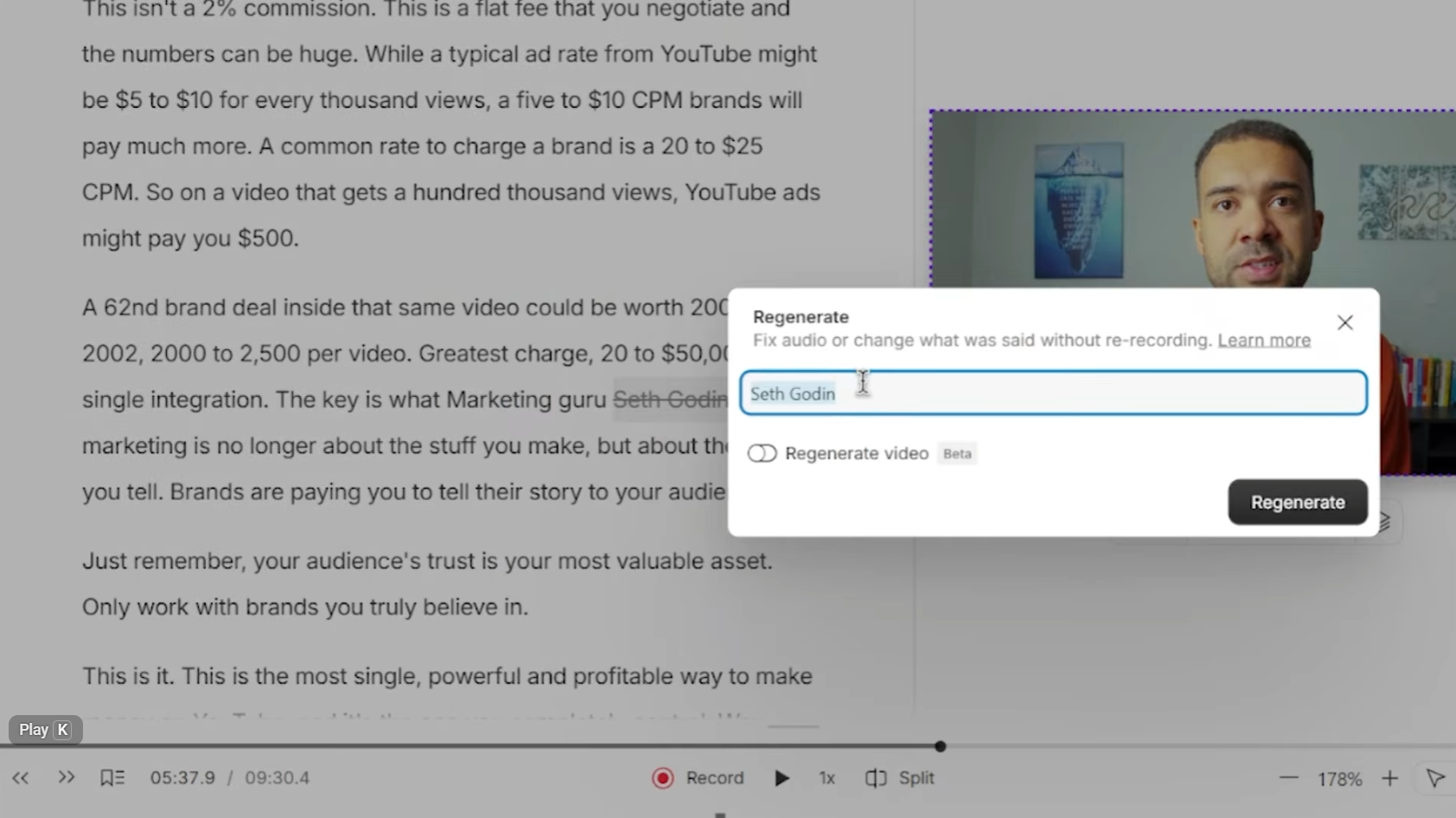

4) Descript (Video Regenerate)

This is one of the most useful kinds of AI video generation: fixing mistakes.

In the video, I showed a talking-head clip where I accidentally said the wrong word, then corrected it by editing the transcript. Descript updates:

- the audio to match the corrected word

- and your mouth movement so it looks like you said it correctly the first time

Caption: Edit the transcript, regenerate the line, and Descript updates the spoken word and mouth movement.

Try it here: Try Descript →

How to fix a wrong word (without reshooting)

- Import your clip and let Descript create the transcript.

- Click the wrong word/phrase and type the correction.

- Run Video Regenerate on that small section.

- Watch the result, then export the corrected clip.

What it’s best for

- Correcting a single word/phrase (names, dates, awkward phrasing)

- Tightening lines after the fact without “jump cut” surgery

- Saving a take you otherwise would’ve thrown away

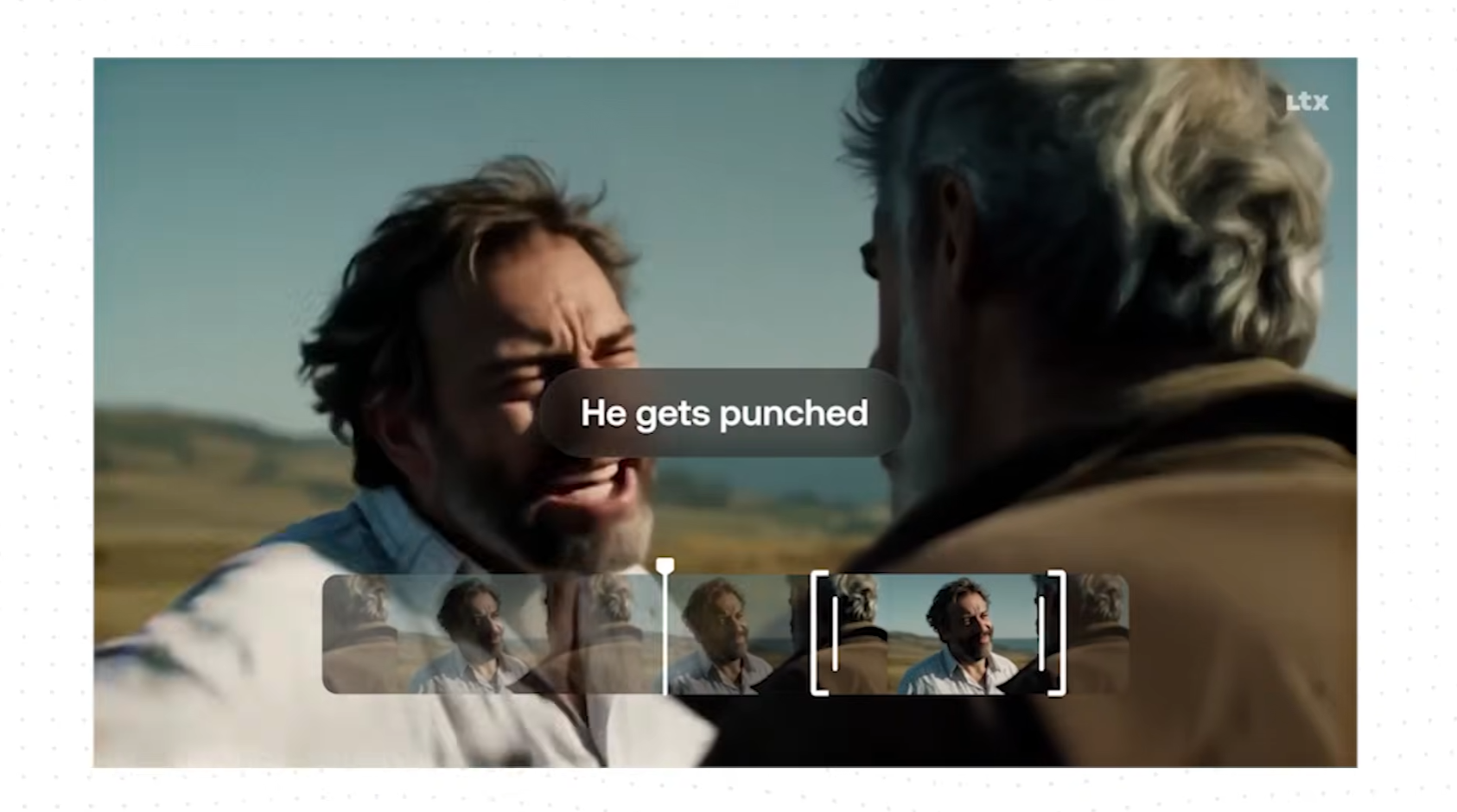

5) LTX Studio (Retake)

Retake is the “director tool” in this list.

The idea: choose a moment, type what you want to happen differently, and it regenerates that segment so you can redirect performance/dialogue without re-shooting—and without regenerating the entire scene.

Caption: Retake focuses on a selected moment inside a shot so you can redirect just that section.

Try it here: Try LTX Studio →

How to use Retake (moment-level redirects)

- Import your shot and identify the exact moment that’s not working.

- Select that segment and describe the change (dialogue/action/emotion).

- Generate and compare versions.

- Keep the best one, then move on—no reshoot, no full-scene redo.

What it’s best for

- Brand/marketing teams testing alternate messaging in the same footage

- Filmmakers refining performance beats

- Creators who want “one more take” without filming again

Which one should you use?

If you want the simplest decision rule:

- You already have footage and want to enhance it: start with Kling O1

- You need fast visuals to support talking points: use Agent Opus

- You want generated clips guided by your performance: try Kling Video 2.6

- You just need to fix what you said on camera: use Descript

- You want to redirect a moment like a director: use LTX Studio (Retake)
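If it helps to see that decision rule as a lookup, here is a minimal sketch. The goal labels and the `pick_tool` function are hypothetical names made up for illustration; only the tool names come from the list above.

```python
# Goal labels are hypothetical shorthand; tool names come from the decision rule.
TOOL_FOR_GOAL = {
    "enhance_existing_footage": "Kling O1",
    "visuals_for_talking_points": "Agent Opus",
    "performance_guided_generation": "Kling Video 2.6",
    "fix_spoken_mistake": "Descript",
    "redirect_a_moment": "LTX Studio (Retake)",
}


def pick_tool(goal):
    """Return the suggested starting tool for a goal, or raise if there is no rule."""
    try:
        return TOOL_FOR_GOAL[goal]
    except KeyError:
        raise ValueError(f"no rule for goal: {goal!r}") from None


print(pick_tool("fix_spoken_mistake"))  # Descript
```

The point of the table shape is that each goal maps to exactly one starting tool; combining tools is a separate step.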

A good combo workflow for Shorts:

- Hook: Kling Video 2.6 (motion-driven “what am I watching?” moment)

- Value: your real talking-head / screen recording

- Pattern break: Agent Opus stat visual

- Cleanup: Descript to fix mistakes

- Enhancement: Kling O1 to upgrade a key shot

- If one beat is wrong: LTX Retake

FAQ

Are these “better” than the generators in Gemini or CapCut?

They’re different rather than better. Gemini/CapCut-style generators are often about generating a clip quickly. The tools above focus more on control: editing existing footage, retaking moments, motion/voice influence, and transcript-based fixes.

Can I use my voice or someone else’s voice?

Some tools support voice-style generation. Use this responsibly: get consent, disclose when appropriate, and avoid impersonation.

What’s the biggest mistake people make with these tools?

Trying to do too much in one go. You’ll get better results with small, specific changes and iteration.

Will these replace video editing?

Not really. They’re best as “superpowers” inside a normal workflow: generate/retake/fix/enhance, then cut it properly in your editor.